A Practical Playbook for Modernization and Operations

Over the last quarter, we took a hard look at how AI-driven efficiencies are being applied across our federal IT contracts, from modernization and operations and maintenance (O&M) to cloud migration, PMO support, and cybersecurity.

The conclusion was clear:

AI belongs in the core of delivery—applied intentionally, responsibly, and with measurable outcomes.

We formalized how Continuum Automation Framework capabilities are applied across:

- O&M enhancements

- Modernization and refactoring

- Greenfield development

- Cloud migration

- PMO automation

- Cybersecurity and ATO support

Each solution scenario is mapped to the right capability, creating a more predictable, scalable delivery model.

Embedding AI Into Federal IT Delivery Models

This structured approach enables us to:

- Deliver more competitive firm-fixed-price (FFP) programs

- Reduce FTE dependency while maintaining output

- Expand toward X-as-a-Service delivery models

- Integrate modernization directly into O&M cost structures

The focus is clear: engineering efficiency into federal IT delivery.

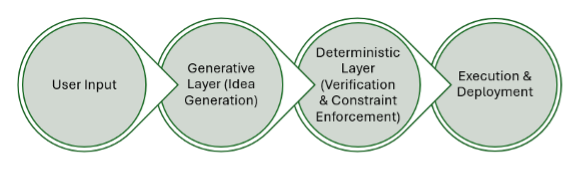

AI-Assisted Development: Governed Flow Coding

A core part of the playbook is how we approach AI-assisted software development.

We standardize on Flow Coding—a generate-and-verify model where:

- AI accelerates development

- Developers maintain full ownership of architecture and quality

Why governance drives results

AI productivity gains vary based on:

- Codebase maturity

- Architectural discipline

- Developer experience

- Technical debt

In well-structured environments, productivity gains can reach 2–3x.

In complex legacy environments, results depend on how effectively governance and standards are applied.

Our playbook incorporates:

- Conservative efficiency assumptions

- Tiered productivity models

- License cost considerations

- Clear governance expectations
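As a rough illustration of how a tiered productivity model with license costs might be expressed, here is a minimal sketch. The tier names, the multipliers (other than 2.0, which echoes the low end of the 2–3x range above), the rates, and the `estimate` function are all hypothetical placeholders, not figures from the playbook.

```python
from dataclasses import dataclass

# Hypothetical tier multipliers. Only 2.0 echoes the low end of the 2-3x
# range quoted above; the other values are illustrative placeholders.
TIER_MULTIPLIERS = {
    "well_structured": 2.0,
    "mixed": 1.4,
    "complex_legacy": 1.1,
}


@dataclass
class Estimate:
    hours: float
    labor_cost: float
    license_cost: float

    @property
    def total_cost(self) -> float:
        return self.labor_cost + self.license_cost


def estimate(baseline_hours: float, tier: str, hourly_rate: float,
             seats: int, license_per_seat: float) -> Estimate:
    """Scale baseline effort by the tier multiplier and add license cost."""
    multiplier = TIER_MULTIPLIERS[tier]  # raises KeyError for unknown tiers
    hours = baseline_hours / multiplier
    return Estimate(
        hours=hours,
        labor_cost=hours * hourly_rate,
        license_cost=seats * license_per_seat,
    )


if __name__ == "__main__":
    e = estimate(1000, "well_structured", hourly_rate=100.0,
                 seats=5, license_per_seat=200.0)
    print(e.hours, e.total_cost)
```

Keeping the license cost as a separate line item makes it visible when tool spend erodes the labor savings in the lower tiers.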

Modernization at Scale with Deterministic Refactoring

For federal modernization, we focus on deterministic refactoring using Continuum Code.

This includes:

- Intelligent code conversion

- Pattern-based refactoring

- Dead code identification

- Architectural restructuring

This approach is deterministic, developer-governed, and measurable.
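To make "dead code identification" concrete, the toy sketch below flags functions that are defined but never referenced in a single Python module. It is only an illustration of the general idea under simplifying assumptions (one file, name-based matching, no dynamic dispatch). It does not describe how Continuum Code works, and the function name `find_unreferenced_functions` is hypothetical.

```python
import ast


def find_unreferenced_functions(source: str) -> list[str]:
    """Return names of functions defined in `source` but never referenced.

    Name-based and single-module only: a function called from another
    file, or through getattr, would be reported as a false positive.
    """
    tree = ast.parse(source)
    defined = {
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    }
    referenced = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name):          # plain references: foo()
            referenced.add(node.id)
        elif isinstance(node, ast.Attribute):   # attribute access: obj.foo()
            referenced.add(node.attr)
    return sorted(defined - referenced)


SAMPLE = '''
def used():
    return 1

def unused():
    return 2

print(used())
'''

if __name__ == "__main__":
    print(find_unreferenced_functions(SAMPLE))
```

The same input always yields the same candidate list, which a developer then reviews before anything is removed.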

Driving predictability in modernization

Execution is strengthened through:

- Upfront complexity assessments beyond lines of code

- Mandatory integration mapping

- Realistic modeling of undocumented systems

These practices lead to:

- More defensible bids

- More predictable execution

- Stronger delivery outcomes
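One way to picture a complexity assessment that looks beyond lines of code is a score that also weighs integrations and undocumented areas. The sketch below is an assumption-laden example: the `SystemProfile` fields, the weights, and the scaling are hypothetical and would need calibration against real program data.

```python
from dataclasses import dataclass


@dataclass
class SystemProfile:
    lines_of_code: int
    integration_count: int        # taken from the integration mapping
    undocumented_fraction: float  # share of the system lacking documentation, 0.0-1.0


def complexity_score(profile: SystemProfile) -> float:
    """Combine size, integrations, and documentation gaps into one score.

    All weights are hypothetical placeholders.
    """
    if not 0.0 <= profile.undocumented_fraction <= 1.0:
        raise ValueError("undocumented_fraction must be between 0.0 and 1.0")
    size_points = profile.lines_of_code / 100_000           # 1 point per 100k LOC
    integration_points = 0.5 * profile.integration_count    # 0.5 point per integration
    documentation_penalty = 1.0 + profile.undocumented_fraction  # up to 2x
    return (size_points + integration_points) * documentation_penalty


if __name__ == "__main__":
    documented = SystemProfile(200_000, 4, 0.0)
    undocumented = SystemProfile(200_000, 4, 0.5)
    print(complexity_score(documented), complexity_score(undocumented))
```

Two systems with identical line counts score differently here once integrations and documentation gaps are counted, which is the point of assessing beyond size alone.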

Accelerating Development with Continuum Design

For greenfield development and structured refactoring, Continuum Design plays a central role.

It brings together:

- Business process modeling

- Domain-driven design (DDD)

- Microservices architecture

- Structured code generation

Where it delivers the most value

- Refactoring well-understood systems

- Small-to-medium application portfolios

- Microservices and API-driven architectures

Applying the right tool to the right scenario

We reserve Continuum Design for scenarios where DDD, APIs, and microservices are central to the effort. That selectivity protects outcomes and delivery credibility.

Data Modernization and Integration with Continuum Connect

In the data domain, Continuum Connect enables:

- Data migration and transformation

- Multi-source integration

- Pipeline orchestration

We prioritize high-complexity environments, where automation delivers the greatest impact.

Efficiency modeling reflects:

- Integration depth

- Security requirements

- Deployment constraints

This ensures projections align with real-world federal conditions.

Cybersecurity and ATO as Scalable Services

Cyber delivery continues to evolve toward service-based models using Continuum Secure.

This includes:

- ISSO-as-a-Service

- ATO-as-a-Service

- Unit-based pricing tied to system complexity

By embedding cyber early in delivery and aligning automation to program structures, we create scalable, repeatable service offerings.
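Unit-based pricing tied to system complexity can be pictured as a simple rate card. The sketch below assumes three complexity bands and monthly per-system rates. The band names, dollar values, and the `monthly_price` function are hypothetical and are not taken from any actual pricing schedule.

```python
# Hypothetical monthly rate per system, keyed by complexity band.
RATE_CARD = {
    "low": 4_000,
    "moderate": 7_000,
    "high": 12_000,
}


def monthly_price(systems_by_band: dict[str, int]) -> int:
    """Price a portfolio as (number of systems in each band) x (band rate)."""
    total = 0
    for band, count in systems_by_band.items():
        if band not in RATE_CARD:
            raise ValueError(f"unknown complexity band: {band}")
        if count < 0:
            raise ValueError("system count cannot be negative")
        total += RATE_CARD[band] * count
    return total


if __name__ == "__main__":
    # Two low-complexity systems and one high-complexity system.
    print(monthly_price({"low": 2, "high": 1}))
```

Under this kind of model the price scales with the number and complexity of systems rather than with headcount.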

Cloud Migration with Compliance Built In

For cloud migration, Concierto provides a software-driven, AWS-endorsed model.

The playbook emphasizes:

- Post-deployment validation strategies

- Early modeling of federal compliance (FISMA High, IL4+)

- Alignment between AWS best practices and agency requirements

This approach ensures cloud modernization delivers efficiency, compliance, and architectural alignment.

Automation as a Core Delivery Capability

Platforms such as PowerApps, ServiceNow, Google Workspace, and Copilot are embedded directly into delivery strategies.

Efficiency timelines reflect real adoption patterns:

- 6–12 months to realize full value

- Dedicated resources included in cost models

- Strong dependency on usability and user adoption

Automation is treated as a designed capability within delivery, not an add-on.
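The 6 to 12 month adoption window can be reflected in a cost model by ramping savings up over time instead of assuming full value on day one. The sketch below assumes a linear ramp, which is a simplification. The function names and the sample figures are hypothetical.

```python
def realized_fraction(month: int, ramp_months: int) -> float:
    """Share of full value realized in a given month, assuming a linear ramp."""
    if ramp_months <= 0:
        raise ValueError("ramp_months must be positive")
    return min(max(month, 0), ramp_months) / ramp_months


def cumulative_savings(full_monthly_savings: float, ramp_months: int,
                       horizon_months: int) -> float:
    """Total savings over the horizon, counting months 1..horizon_months."""
    return sum(
        full_monthly_savings * realized_fraction(month, ramp_months)
        for month in range(1, horizon_months + 1)
    )


if __name__ == "__main__":
    # Compare a 6-month ramp with a 12-month ramp over the first year.
    fast = cumulative_savings(10_000.0, ramp_months=6, horizon_months=12)
    slow = cumulative_savings(10_000.0, ramp_months=12, horizon_months=12)
    print(round(fast), round(slow))
```

Comparing the two ramps over the same horizon shows how much first-year value depends on adoption speed, which is why dedicated adoption resources belong in the cost model.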

The Bottom Line: Discipline Drives Outcomes

This playbook reflects a deliberate approach to AI adoption in federal environments.

It centers on:

- Governance

- Realistic modeling

- Scenario-based application

- Service-driven delivery

The result is predictable, measurable AI-driven efficiency, aligned to the realities of federal programs.

That discipline is what differentiates successful modernization at scale.